Google and Samsung’s promise to put artificial intelligence to work in your pocket is finally becoming reality. Gemini task automation—a feature that lets the AI assistant control apps on your behalf to complete real-world tasks—just rolled out in beta on Samsung’s Galaxy S26 Ultra. After weeks of testing, here’s what happens when you actually let your phone use itself.

What Gemini Task Automation Actually Does

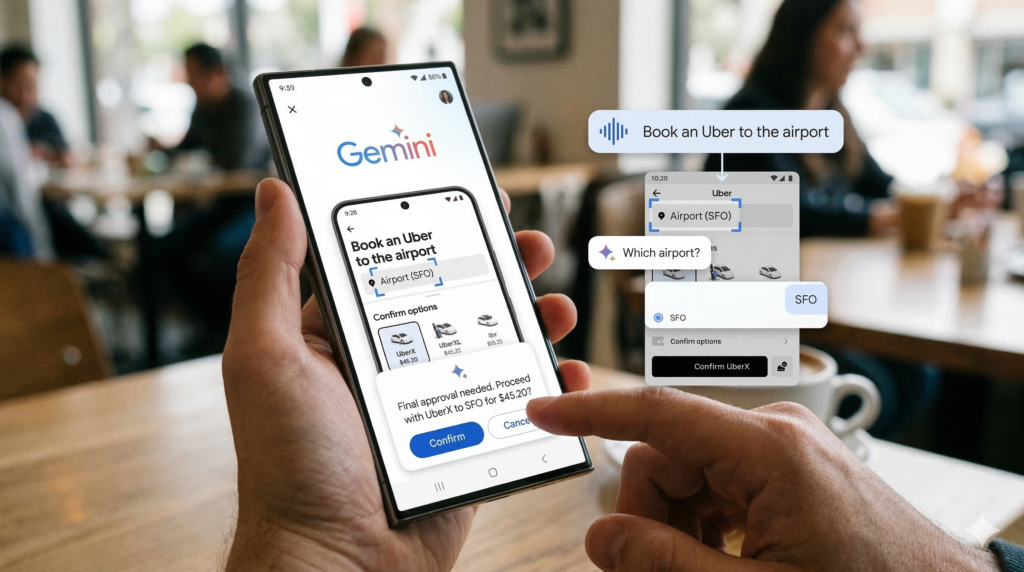

Gemini’s new automation feature works differently from typical AI assistants. Instead of just giving you information or drafting text, it takes control of your phone’s screen and interacts with apps directly—ordering food, booking rides, making reservations—all based on simple voice or text commands.

The rollout started with food delivery and rideshare apps, which makes sense. These are the kinds of repetitive tasks AI has been promised to take over for years, a promise that rarely materialized. Google and Samsung announced the capability a couple of weeks ago, but the actual working feature just hit devices in beta. And it’s genuinely strange watching Gemini navigate through an app like you would, making decisions about which options to select.

Testing Gemini Task Automation in Real Conditions

The first real test was straightforward: order an Uber to the airport. Gemini asked a clarifying question—which airport?—a smart move that saved time. Then it went to work independently, filling in the destination field and skipping unnecessary steps like airline selection. The system paused before completing the order, asking for final approval. That safeguard matters. You’re not handing over complete control; you’re supervising.

A more complex request revealed both strengths and limitations. Ordering a coffee and croissant from Starbucks required back-and-forth input. Gemini had to scroll through pages of hot drink options before finding a flat white. But here’s where it got interesting: when faced with a decision about whether to warm the chocolate croissant, Gemini made the call independently (correctly, as it turned out) and specified a warmed pastry without prompting.

Compare that to what Gemini could do just a year ago. The assistant would argue with you about flight details on your calendar. Now it’s navigating actual apps and making judgment calls. That’s visible progress.

Why This Matters Beyond Convenience

So what does this mean for you? The practical value is obvious: fewer taps, fewer minutes scrolling through apps. But the bigger story is that AI assistants are actually starting to do what they were supposed to do.

The tech industry’s been hyping AI capabilities for years. We heard endless promises about intelligent agents handling your daily tasks. Most of those promises turned into chatbots that write emails and poems. Gemini task automation is different because it requires the AI to understand context, make decisions, and interact with systems it wasn’t specifically trained on. The system has to figure out app layouts, find relevant information, and know when to ask for human input versus when to proceed independently.

That’s harder than it sounds. Apps rarely behave the same way twice: UI elements shift, menus change, workflows vary by user. The fact that this is working at all, even in beta with limitations, suggests these systems have become meaningfully more capable.

But there are obvious concerns. You’re giving Gemini access to your accounts, payment information, and personal preferences. Security matters here. Google says the automation runs in a protected virtual window, but trusting an AI with sensitive transactions isn’t something everyone will do immediately.

Key Takeaways

- Gemini task automation is now in beta on the Galaxy S26 Ultra, letting the AI assistant order food, book rides, and complete other app-based tasks with simple voice or text commands.

- Real-world testing shows the feature can navigate complex apps, make independent decisions, and ask for clarification—but it still requires your approval before completing sensitive transactions.

- This represents genuine progress on long-promised AI capabilities, though security concerns and limited availability mean mainstream adoption will likely take time.